| Exam Name: | Designing and Implementing a Data Science Solution on Azure |
| Exam Code: | DP-100 Dumps |
| Vendor: | Microsoft |
| Certification: | Microsoft Azure |
| Questions: | 525 Q&A's |
| Shared By: | ned |

You use Azure Machine Learning to train a model. You must use Bayesian sampling to tune hyperparameters. You need to select a learning_rate parameter distribution.

Which two distributions can you use? Each correct answer presents a complete solution. NOTE: Each correct selection is worth one point.
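For background, the Azure Machine Learning documentation states that Bayesian sampling supports only the `choice`, `uniform`, and `quniform` parameter expressions, and it defines `quniform(low, high, q)` as `round(uniform(low, high) / q) * q`. The sketch below is plain Python, not the Azure SDK; it only illustrates what the two continuous-range expressions produce for a learning rate. The bounds `0.001` to `0.1` and the step `0.005` are assumed values chosen for illustration.

```python
import random


def uniform(low, high, rng):
    """Draw a value uniformly between low and high."""
    return rng.uniform(low, high)


def quniform(low, high, q, rng):
    """Draw uniformly, then snap to the nearest multiple of q."""
    return round(rng.uniform(low, high) / q) * q


rng = random.Random(0)  # fixed seed so the draws are repeatable

# Continuous learning rates anywhere in the range.
uniform_samples = [uniform(0.001, 0.1, rng) for _ in range(5)]

# Learning rates restricted to a grid with spacing 0.005.
quniform_samples = [quniform(0.001, 0.1, 0.005, rng) for _ in range(5)]

print(uniform_samples)
print(quniform_samples)
```

In the SDK itself, the same expressions would be passed to the sweep configuration rather than sampled by hand.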

You are creating a classification model for a banking company to identify possible instances of credit card fraud. You plan to create the model in Azure Machine Learning by using automated machine learning.

The training dataset that you are using is highly unbalanced.

You need to evaluate the classification model.

Which primary metric should you use?
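To see why the choice of metric matters on unbalanced data, consider a minimal sketch in plain Python (not automated machine learning itself). It scores a model that labels every transaction as legitimate. The class split of 10 fraud cases in 1,000 transactions is an assumed figure for illustration, and the AUC here is the simple binary form, whereas the automated machine learning metric `AUC_weighted` averages per-class AUC weighted by class size.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def roc_auc(y_true, scores):
    """Binary ROC AUC: the probability that a random positive is scored
    above a random negative, counting ties as half."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Assumed data: 10 fraudulent transactions (1) and 990 legitimate ones (0).
y_true = [1] * 10 + [0] * 990

# A useless model that always predicts "legitimate" with the same score.
always_legit_pred = [0] * 1000
always_legit_scores = [0.0] * 1000

acc = accuracy(y_true, always_legit_pred)
auc = roc_auc(y_true, always_legit_scores)

print(f"accuracy = {acc:.2f}")  # high despite catching no fraud
print(f"AUC      = {auc:.2f}")  # no better than chance
```

Accuracy rewards the majority class, while a ranking-based metric such as AUC exposes that the model cannot separate fraud from legitimate transactions.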

You manage an Azure Machine Learning workspace. The development environment for managing the workspace is configured to use Python SDK v2 in Azure Machine Learning Notebooks.

A Synapse Spark Compute is currently attached and uses system-assigned identity.

You need to use Python code to update the Synapse Spark Compute to use a user-assigned identity.

Solution: Create an instance of the MLClient class.

Does the solution meet the goal?
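For context, the following sketch shows how the objects involved in such an update might fit together in Python SDK v2, assuming the `azure-ai-ml` and `azure-identity` packages are installed. The function name and all of its parameters are hypothetical placeholders, and the imports are deferred into the function body so that the definition loads even where the Azure packages are absent. Treat it as an illustration of the pattern, not as the answer to the question.

```python
def switch_to_user_assigned_identity(
    subscription_id,
    resource_group,
    workspace_name,
    compute_name,
    synapse_pool_resource_id,
    user_identity_resource_id,
):
    """Re-submit an attached Synapse Spark compute with a user-assigned identity.

    All arguments are placeholders supplied by the caller; none come from
    the question text.
    """
    # Deferred imports: these require azure-ai-ml and azure-identity.
    from azure.ai.ml import MLClient
    from azure.ai.ml.entities import (
        IdentityConfiguration,
        ManagedIdentityConfiguration,
        SynapseSparkCompute,
    )
    from azure.identity import DefaultAzureCredential

    # Client handle to the workspace.
    ml_client = MLClient(
        DefaultAzureCredential(), subscription_id, resource_group, workspace_name
    )

    # Describe the user-assigned managed identity to attach.
    identity = IdentityConfiguration(
        type="UserAssigned",
        user_assigned_identities=[
            ManagedIdentityConfiguration(resource_id=user_identity_resource_id)
        ],
    )

    # Compute definition that carries the new identity.
    compute = SynapseSparkCompute(
        name=compute_name,
        resource_id=synapse_pool_resource_id,
        identity=identity,
    )

    # Submit the update; this returns a long-running operation poller.
    return ml_client.begin_create_or_update(compute)
```

The client, the identity configuration, and the compute definition each play a separate role, which is why the questions in this series examine them one at a time.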

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

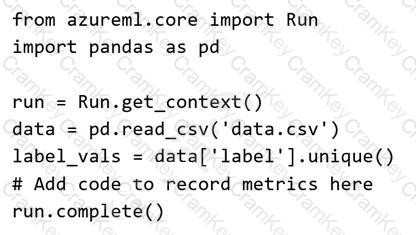

You plan to use a Python script to run an Azure Machine Learning experiment. The script creates a reference to the experiment run context, loads data from a file, identifies the set of unique values for the label column, and completes the experiment run:

The experiment must record the unique labels in the data as metrics for the run that can be reviewed later.

You must add code to the script to record the unique label values as run metrics at the point indicated by the comment.

Solution: Replace the comment with the following code:

run.log_list('Label Values', label_vals)

Does the solution meet the goal?
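As a rough illustration of the logging step, the sketch below wraps the call in a small helper and exercises it with a stand-in run object, so it runs without the Azure SDK. In SDK v1, `Run.log_list(name, value, description='')` records a list of values under a single metric name. The helper `record_label_values`, the `FakeRun` class, and the sample labels are all hypothetical; in a real script the run object would come from `Run.get_context()`.

```python
def record_label_values(run, labels):
    """Log the distinct label values on `run` as one list metric."""
    label_vals = sorted(set(labels))  # unique values, in a stable order
    run.log_list("Label Values", label_vals)
    return label_vals


class FakeRun:
    """Stand-in for azureml.core.Run that only captures log_list calls."""

    def __init__(self):
        self.logged = {}

    def log_list(self, name, value, description=""):
        self.logged[name] = list(value)


# Assumed sample label column for illustration.
labels = ["cat", "dog", "cat", "bird", "dog"]

run = FakeRun()
label_vals = record_label_values(run, labels)
print(run.logged)
```

Because the helper only needs an object with a `log_list` method, the same function can be called with the real run context inside an experiment script.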