| Exam Name: | AWS Certified Machine Learning Engineer - Associate |
| Exam Code: | MLA-C01 |
| Vendor: | Amazon Web Services |
| Certification: | AWS Certified Associate |
| Questions: | 230 Q&As |
| Shared By: | brooklyn |

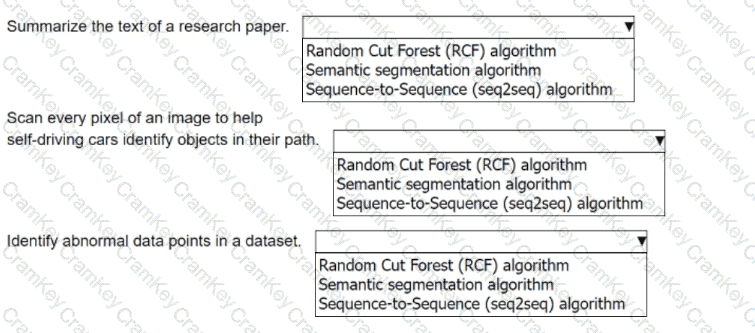

An ML engineer must choose the appropriate Amazon SageMaker algorithm to solve specific AI problems.

Select the correct SageMaker built-in algorithm from the following list for each use case. Each algorithm should be selected one time.

• Random Cut Forest (RCF) algorithm

• Semantic segmentation algorithm

• Sequence-to-Sequence (seq2seq) algorithm

An ML engineer is preparing a dataset that contains medical records to train an ML model to predict the likelihood of patients developing diseases.

The dataset contains columns for patient ID, age, medical conditions, test results, and a "Disease" target column.

How should the ML engineer configure the data to train the model?
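A minimal sketch of one common way to prepare such a dataset, assuming the patient ID is only an identifier with no predictive signal and "Disease" is the label. The sample records, the lowercase column names, and the helper `split_features_and_target` are hypothetical and used only for illustration.

```python
# Hypothetical sample rows; the column names loosely follow the question's description.
records = [
    {"patient_id": "P001", "age": 54, "conditions": "diabetes", "test_result": 7.1, "Disease": 1},
    {"patient_id": "P002", "age": 37, "conditions": "none", "test_result": 5.2, "Disease": 0},
]


def split_features_and_target(rows, target="Disease", drop=("patient_id",)):
    """Separate the target column from the features and drop identifier columns."""
    features = [
        {key: value for key, value in row.items() if key != target and key not in drop}
        for row in rows
    ]
    labels = [row[target] for row in rows]
    return features, labels


features, labels = split_features_and_target(records)
```

Here the remaining columns (age, medical conditions, test results) are kept as features, and the "Disease" values become the labels the model is trained to predict.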

A company is building a near real-time data analytics application to detect anomalies and failures for industrial equipment. The company has thousands of IoT sensors that send data every 60 seconds. When new versions of the application are released, the company wants to ensure that application code bugs do not prevent the application from running.

Which solution will meet these requirements?

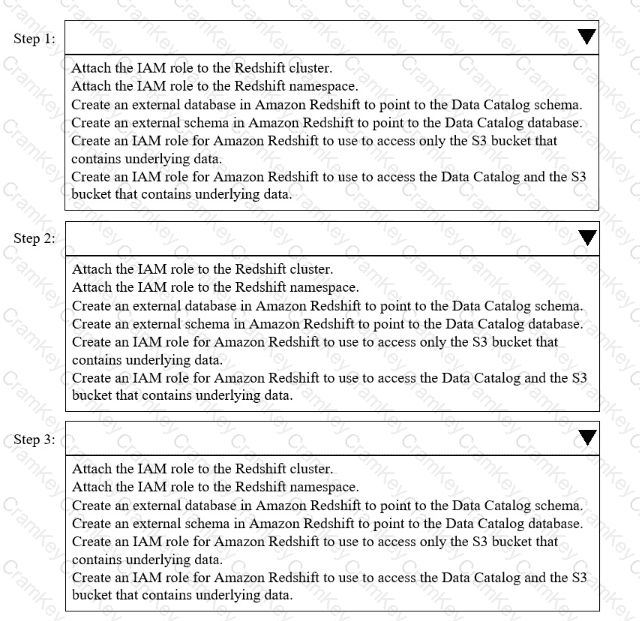

A company needs to combine data from multiple sources. The company must use Amazon Redshift Serverless to query an AWS Glue Data Catalog database and underlying data that is stored in an Amazon S3 bucket.

Select and order the correct steps from the following list to meet these requirements. Select each step one time or not at all. (Select and order three.)

• Attach the IAM role to the Redshift cluster.

• Attach the IAM role to the Redshift namespace.

• Create an external database in Amazon Redshift to point to the Data Catalog schema.

• Create an external schema in Amazon Redshift to point to the Data Catalog database.

• Create an IAM role for Amazon Redshift to use to access only the S3 bucket that contains underlying data.

• Create an IAM role for Amazon Redshift to use to access the Data Catalog and the S3 bucket that contains underlying data.
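For orientation, the external-schema option above corresponds to a Redshift `CREATE EXTERNAL SCHEMA ... FROM DATA CATALOG` statement. The sketch below only builds that SQL string. The helper name `build_external_schema_sql`, the schema name, the database name, and the role ARN are all hypothetical placeholders, and the statement assumes the referenced IAM role already exists and is associated with the Redshift Serverless namespace.

```python
def build_external_schema_sql(schema_name, catalog_database, iam_role_arn):
    """Return SQL that maps a Redshift external schema to a Glue Data Catalog database."""
    return (
        f"CREATE EXTERNAL SCHEMA IF NOT EXISTS {schema_name}\n"
        f"FROM DATA CATALOG\n"
        f"DATABASE '{catalog_database}'\n"
        f"IAM_ROLE '{iam_role_arn}';"
    )


# Placeholder values for illustration only.
sql = build_external_schema_sql(
    schema_name="catalog_schema",
    catalog_database="sales_catalog_db",
    iam_role_arn="arn:aws:iam::123456789012:role/ExampleRedshiftCatalogRole",
)
print(sql)
```

Once such a schema exists, tables registered in the Data Catalog database can be queried through it, with the underlying data read from Amazon S3 under the permissions of the IAM role.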